We built our marketing site with AI: first with Lovable, then with Claude Code, with Tailor running on it the entire time. Nine months later, the site has professional SEO, a help chatbot, automated OG images, SSO-gated admin tools, and a full testing and measurement stack.

But the real unlock wasn't building faster. It was learning faster. AI made page creation cheap. The new bottleneck is deciding what each visitor should see, measuring what works, and improving fast enough to keep up with your ad spend.

Here's how we did it.

Lovable showed us the future

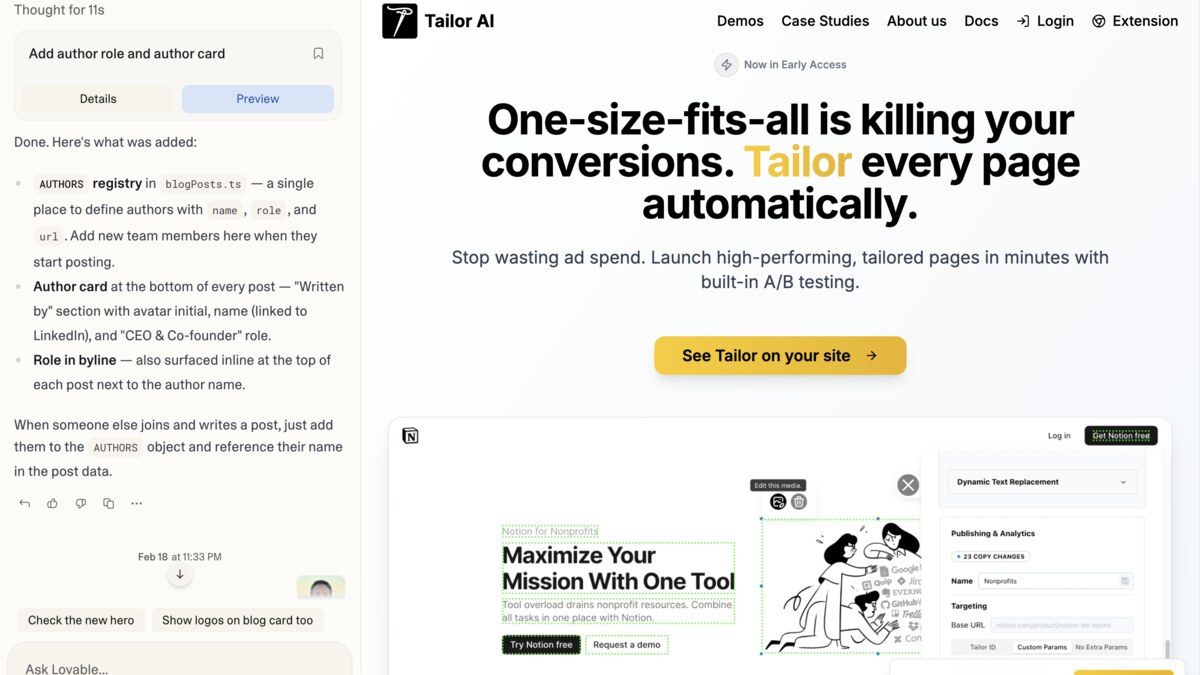

Our first version came from Lovable, and I'm still a big fan. Lovable showed me the power of true AI-driven web development, with a full build and eval loop, long before I saw anything like it elsewhere. In a way, we modeled Tailor after Lovable, making our page tailoring tools the "Lovable for existing websites."

It got us from zero to a credible site fast: homepage, feature pages, docs, blog, team page, waitlist flow. Real momentum around design and copy. For marketers, this is a shift. You can get to something real quickly enough to test positioning and learn, instead of waiting on long engineering or agency cycles.

Adding Tailor on top of Lovable

We started using Tailor on our own site early, and that changed everything. Adding Tailor on top of the Lovable-built site let us keep going for another six months before we needed to change anything about the underlying stack.

What we started doing with Tailor:

- Extensive headline and CTA testing (A/B and multivariate)

- Understanding visitor demographics via IP enrichment

- Tailoring pages to Google and Meta ads via UTM parameters

- Matching pages to social post intent

- Site traffic and campaign insights with automatic alerts

- Connecting page CTAs to downstream conversion goals via Amplitude

A static site teaches you slowly. A tailored and tested site teaches you faster. That was already clear at this stage.

The wall: "looks good" is not the same as ready for scale

Eventually we hit the predictable wall. Not because Lovable failed, but because our needs outgrew what a prompt-first site builder could handle.

The main driver was SEO. We needed professional-grade technical SEO, and Lovable's single-page app architecture made that hard. Moving to Vercel also unlocked backend capabilities we couldn't build before: automated OG image generation for every page, a support chatbot for our docs, SSO-gated admin tooling, and real deployment automation.

This is the normal tradeoff of prompt-first site generation. AI can get you to "looks good" very fast. It does not automatically get you to "ready for everything you'll need as you scale."

And once paid traffic is involved, those details stop being pedantic. Bad foundations don't just slow you down; they make your results harder to trust. The team starts debating data instead of learning from it.

Claude Code unlocked the next level

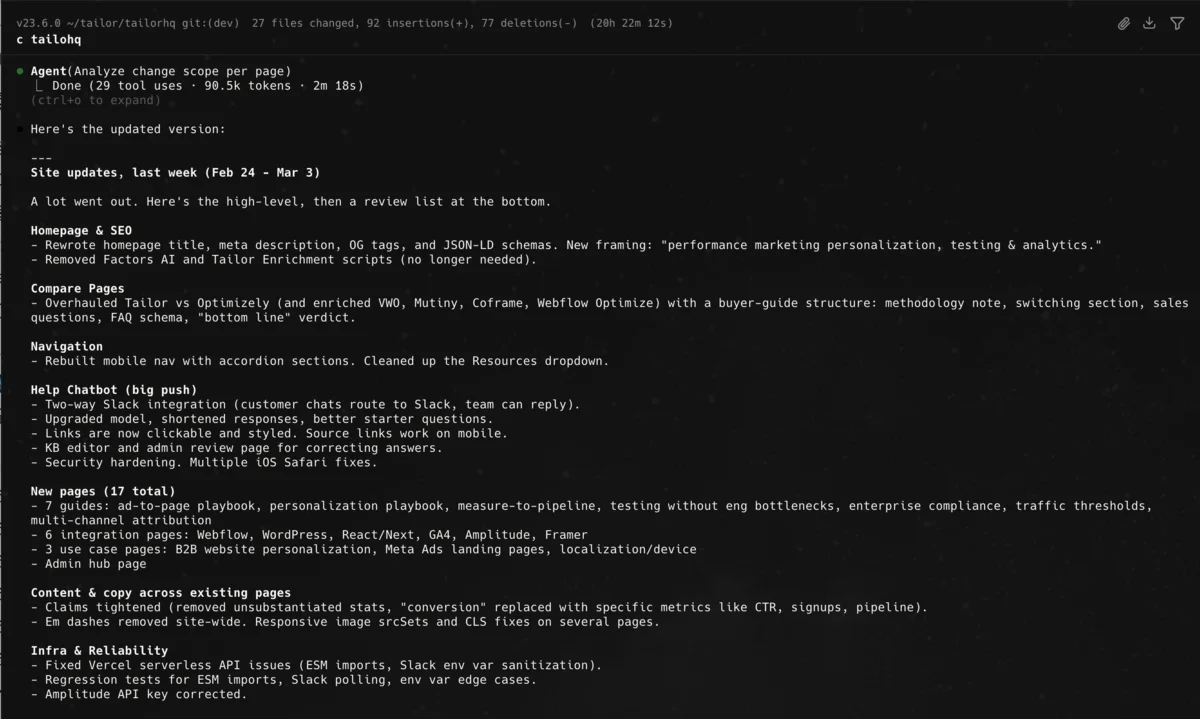

The switch to Vercel took about a day. What came after changed everything.

Claude Code is similar to working with Lovable's agent (maybe they even use Claude behind the scenes), but there are subtle differences that really stand out to an engineer turned CEO and marketer like me.

With Lovable, I'd sometimes hit limits: "Sorry, I can't set up your Content Security Policy as a backend header the way your security audit requires." Or: "Sorry, I can't set up Static Site Generation even though that would improve your SEO." These are reasonable limitations of a prompt-first builder. But once you need them, you need them.

Claude Code works inside the actual codebase. Instead of isolated outputs, it's a collaborator that can work across the system, follow conventions, and make coordinated changes. That's when the project stopped feeling like a marketing site and started feeling like software we could operate and improve.

Once I had it set up, I got addicted to all the things I could build:

- Professional technical SEO infrastructure

- Automated OG image generation for every page

- A lot more content pages, all following SEO/AEO/GEO best practices

- Image optimization and performance improvements

- A help chatbot with a live Slack connection for team support, built from scratch

- SSO-gated admin tooling for the chatbot knowledge base

- Tests around critical behavior

This is the future, in my opinion. Not "AI writes a page." More like: AI becomes a high-bandwidth implementation partner inside your production stack.

One honest caveat: this is addictive

This mode of building has serious "addictive video game energy." The leverage is real. You can move from idea to implementation to improvement in a single sitting. There were plenty of nights where I sat down after the kids were asleep thinking I'd fix one small thing, and suddenly it was 1:00am.

That's not fake productivity. But it can quietly break your boundaries if you let it. The cost shows up in boring, important places: sleep, recovery, patience, family presence.

I think we need to be more honest about both sides: the leverage is incredible, and the intensity is real. The answer is not "slow down." It's: use the leverage, but build guardrails. Clear goals, measurement integrity, prioritization discipline, and human judgment on what matters.

Building pages vs. improving them under live traffic

This framing helped me make sense of what we were actually doing.

Building (Lovable, Claude Code) helps you create the system faster. Improving under live traffic (Tailor) helps the system perform better while visitors are arriving. It tailors experiences by intent, prioritizes tests, detects issues, and explains what changed.

Both matter. But if you care about CAC, CVR, pipeline, and revenue, run-time AI is where a lot of the business value gets created. The speed of building was useful. The speed of learning what actually worked for different traffic was even more important.

What Tailor actually does on our site

The capabilities I listed earlier are not abstract. Here's what they look like when you're running real paid traffic.

Campaign-specific tailoring.

"Landing page personalization" traffic sees product-led messaging. "Improve paid traffic conversion" traffic sees our traffic leaks angle. "Mutiny alternative" traffic sees competitive positioning. Most paid teams dump wildly different intent into the same page and wonder why CVR is mediocre. That's paying premium CPCs to be vague.

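To make the campaign-to-angle mapping concrete, here is a minimal sketch of how a page could pick a messaging angle from the `utm_campaign` parameter. It is not how Tailor is implemented. The campaign slugs, the `Angle` type, and the `pickAngle` function are assumptions made up for illustration; only the three angles themselves come from the campaigns described above.

```typescript
// Messaging angles named in the post; "generic" is an assumed fallback.
type Angle = "generic" | "product-led" | "traffic-leaks" | "competitive";

// Hypothetical campaign slugs. Real UTM values depend on how the ads are tagged.
const ANGLE_BY_CAMPAIGN = new Map<string, Angle>([
  ["landing-page-personalization", "product-led"],
  ["improve-paid-traffic-conversion", "traffic-leaks"],
  ["mutiny-alternative", "competitive"],
]);

// Read utm_campaign from the landing URL and return the matching angle.
// Unknown or missing campaigns fall back to the generic page.
function pickAngle(landingUrl: string): Angle {
  const campaign = new URL(landingUrl).searchParams.get("utm_campaign");
  if (campaign === null) return "generic";
  return ANGLE_BY_CAMPAIGN.get(campaign.trim().toLowerCase()) ?? "generic";
}
```

The fallback matters as much as the mapping: untagged or mistyped campaigns should land on the default page rather than break it.
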
Intent matching across channels.

When we share content on LinkedIn, Tailor adapts the landing experience to match the angle of the post. If the post was about traffic leak diagnosis, the page hits that framing immediately instead of opening with a generic pitch.

A/B testing built for performance marketers.

Tailor includes the core testing and analytics capabilities you'd expect from Optimizely, rebuilt for modern performance marketing workflows. That means A/B/C testing with statistical significance, plus click distribution, dwell time, scroll depth, and advanced conversion goals, both on-page and downstream. The dashboarding is opinionated toward what paid teams actually need to see: which variant wins for which segment, and whether that lift carries through to pipeline.
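
For readers who want to see what "statistical significance" means in practice, one common check between a control and a single challenger is a pooled two-proportion z-test. The sketch below is a generic textbook version, not Tailor's statistics engine. `VariantStats`, `twoProportionZ`, and `isSignificantAt95` are names invented for illustration.

```typescript
interface VariantStats {
  visitors: number;
  conversions: number;
}

// Pooled two-proportion z-score for challenger b against control a.
// Positive values mean b converts better than a.
function twoProportionZ(a: VariantStats, b: VariantStats): number {
  if (a.visitors <= 0 || b.visitors <= 0) {
    throw new Error("both variants need at least one visitor");
  }
  const rateA = a.conversions / a.visitors;
  const rateB = b.conversions / b.visitors;
  const pooled = (a.conversions + b.conversions) / (a.visitors + b.visitors);
  const standardError = Math.sqrt(
    pooled * (1 - pooled) * (1 / a.visitors + 1 / b.visitors),
  );
  // No variation at all (zero or 100% conversion everywhere): no evidence either way.
  return standardError === 0 ? 0 : (rateB - rateA) / standardError;
}

// Two-sided test at the 95% level: |z| above 1.96.
function isSignificantAt95(a: VariantStats, b: VariantStats): boolean {
  return Math.abs(twoProportionZ(a, b)) > 1.96;
}
```

With three or more variants, running this pairwise inflates the false-positive rate, so a real A/B/C setup would need a correction or a different test.
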

Alerts that catch problems early.

Tailor surfaces campaign-level breakdowns and sends alerts when conversion drops, traffic spikes, or sources go dark. Most teams find out something broke when pipeline is already down.
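
The three alert types above can be sketched as simple threshold checks against a trailing baseline for one traffic source. This is an assumption about the general shape of such a check, not Tailor's alerting logic. The names and the `DROP_RATIO` and `SPIKE_RATIO` thresholds are illustrative only.

```typescript
interface DayStats {
  visitors: number;
  conversions: number;
}

type Alert = "conversion_drop" | "traffic_spike" | "source_dark";

// Illustrative thresholds, not recommendations.
const DROP_RATIO = 0.5; // alert if conversion rate falls below half the baseline rate
const SPIKE_RATIO = 3; // alert if traffic exceeds three times the baseline daily average

// Compare today's numbers for one traffic source against its trailing baseline days.
function detectAlerts(baseline: DayStats[], today: DayStats): Alert[] {
  const totalVisitors = baseline.reduce((sum, d) => sum + d.visitors, 0);
  const totalConversions = baseline.reduce((sum, d) => sum + d.conversions, 0);
  if (baseline.length === 0 || totalVisitors === 0) return []; // nothing to compare against

  const avgVisitors = totalVisitors / baseline.length;
  const baselineRate = totalConversions / totalVisitors;

  // A source that used to send traffic and now sends none has gone dark.
  if (today.visitors === 0) return ["source_dark"];

  const alerts: Alert[] = [];
  if (today.visitors > avgVisitors * SPIKE_RATIO) alerts.push("traffic_spike");
  if (today.conversions / today.visitors < baselineRate * DROP_RATIO) {
    alerts.push("conversion_drop");
  }
  return alerts;
}
```

Fixed ratios like these are noisy on low-traffic sources, so a production version would likely add a minimum sample size before alerting.
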

Enrichment and ICP visibility.

Tailor uses IP-based enrichment to show us which companies, roles, and industries are visiting, and how that traffic maps to our ideal customer profiles. That alone changes how you read your analytics. You stop asking "how much traffic did we get" and start asking "how much of the right traffic did we get." There's also the opportunity to tailor pages to these segments directly. We haven't done much of that at our scale yet, but our customers have, and the results are significant.

Downstream measurement.

Page CTAs are set up in Tailor, and downstream goals (signup, qualified lead, meeting booked) are pulled in from Amplitude. A page that converts more free signups but worse pipeline is not better. It's just better at lying.
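
A small sketch shows why the choice of goal changes the winner. It assumes the per-variant counts have already been joined from on-page and downstream data. The `VariantFunnel` shape and the `winnerBy` function are hypothetical and do not call any real Tailor or Amplitude API.

```typescript
interface VariantFunnel {
  name: string;
  visitors: number;
  signups: number; // on-page goal
  qualifiedLeads: number; // downstream goal
}

type Goal = "signups" | "qualifiedLeads";

// Pick the variant with the highest per-visitor rate for the chosen goal.
function winnerBy(variants: VariantFunnel[], goal: Goal): VariantFunnel {
  if (variants.length === 0) throw new Error("need at least one variant");
  const rate = (v: VariantFunnel): number =>
    v.visitors > 0 ? v[goal] / v.visitors : 0;
  return variants.reduce((best, v) => (rate(v) > rate(best) ? v : best));
}
```

If `winnerBy(variants, "signups")` and `winnerBy(variants, "qualifiedLeads")` return different variants, the page that looks best on signups is the one that is "better at lying."
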

Where we're taking it next

The concrete usage above is table stakes. Here's where the real leverage is headed.

Personalize by campaign, keyword, and role.

Not just by traffic source, but by the specific keyword theme, match type, and inferred visitor role. A VP of Marketing sees strategic outcomes (CAC efficiency, budget allocation). A growth manager sees workflow speed (launch tests fast, fewer dev bottlenecks). A founder sees "do more with a lean team." Same product, different buying trigger.

Close the loop to revenue.

We're connecting experiment results and segment behavior all the way through to pipeline and closed-won. Then segmenting that by campaign, keyword, landing page variant, enrichment segment, and company size. That becomes both product value and go-to-market insight.

Next-best-test recommendations.

Instead of blank canvases, Tailor should suggest what to test next: "Traffic from campaign X underperforms on mobile, test shorter hero plus proof above fold." Marketers don't need more options. They need better judgment at scale. The goal is to automatically personalize your whole site to all your visitor intents, with just the right amount of human in the loop.

Ad-to-page message match.

Many paid teams optimize pre-click and post-click separately. The visitor experiences it as one journey. Tailor should connect ad angle to landing page angle automatically, then monitor whether message match actually improved conversion.

Consolidated performance reporting.

Every paid team runs a weekly performance meeting. To prepare, someone duct-tapes data from ad platforms, analytics, CRM exports, and spreadsheets into one view. The data rarely lines up. Attribution models disagree, date ranges don't match, and nothing is apples-to-apples without cleanup. Marketers need clearly attributed performance data in one place, with answers to "what's working," "why," and "what changed this week."

Competitive insights.

The difference between a junior and senior performance marketer is knowing what competitors are doing. What ads are they running? What do their landing pages say? How are they positioning across geos and channels? That context turns weekly meetings from status reporting into real decision-making. Everyone is trying to come up with genuinely new positioning to test, not just regurgitating the same copy slightly differently and hoping for growth.

Ad orchestration.

This one is more exploratory, but the pain is real. Teams spend hours on micro-adjustments: shifting budget, toggling ads, clicking through hundreds of creatives for small copy edits. Ad platforms don't make it easy to batch-change across campaigns, and they regularly do things like blow through a month of budget in a day. Some of this work is naturally expressed in plain language: "turn off all the ads with dogs in them in EMEA." That's where orchestration should be headed.

A personalization tool changes pages. A serious performance marketing system changes the whole loop.

What I'd tell marketers and founders building this way

Start with generators, then graduate to collaborators.

Prompt-first tools are incredible for speed. But once the site matters, move to a workflow where AI works inside your real codebase and conventions.

Treat your site like software.

If the site drives paid traffic and pipeline, it needs CI/CD, tests, monitoring, and documentation. Otherwise you're building a beautiful liability.

Optimize for learning speed, not just shipping speed.

AI makes creation fast. The bigger advantage comes from faster testing, tailoring, measuring, and diagnosis while traffic is live.

Connect your data before you need it.

Ad platform spend, on-site behavior, and downstream analytics should be connected early. Otherwise your team spends too much time arguing about what happened.

Bottom line

AI didn't make marketing strategy irrelevant. It made execution faster and raised the value of judgment.

The sequence that now makes the most sense to me is:

- Use AI generators to get to a first version fast

- Use AI collaborators to harden the actual system

- Use a runtime layer to tailor experiences, monitor the full traffic and performance picture, and improve outcomes under live traffic

- Use human judgment to decide what matters and where to push next

That mode of building is the future.

It is also more intense than people realize.

If you're building your site this way, especially if you run performance marketing, I'd love to hear what's working, where you've hit the wall, and what you think the optimization layer should do next.